Downstream Analysis with flashscenic

This tutorial demonstrates how to use flashscenic for gene regulatory network (GRN) analysis and downstream biological interpretation. We use the Immune_ALL_human dataset from the scIB benchmark (Luecken et al. 2022): 33,506 cells across 16 immune and hematopoietic cell types from 10 batches.

What you’ll learn:

Running the flashscenic pipeline (one function call)

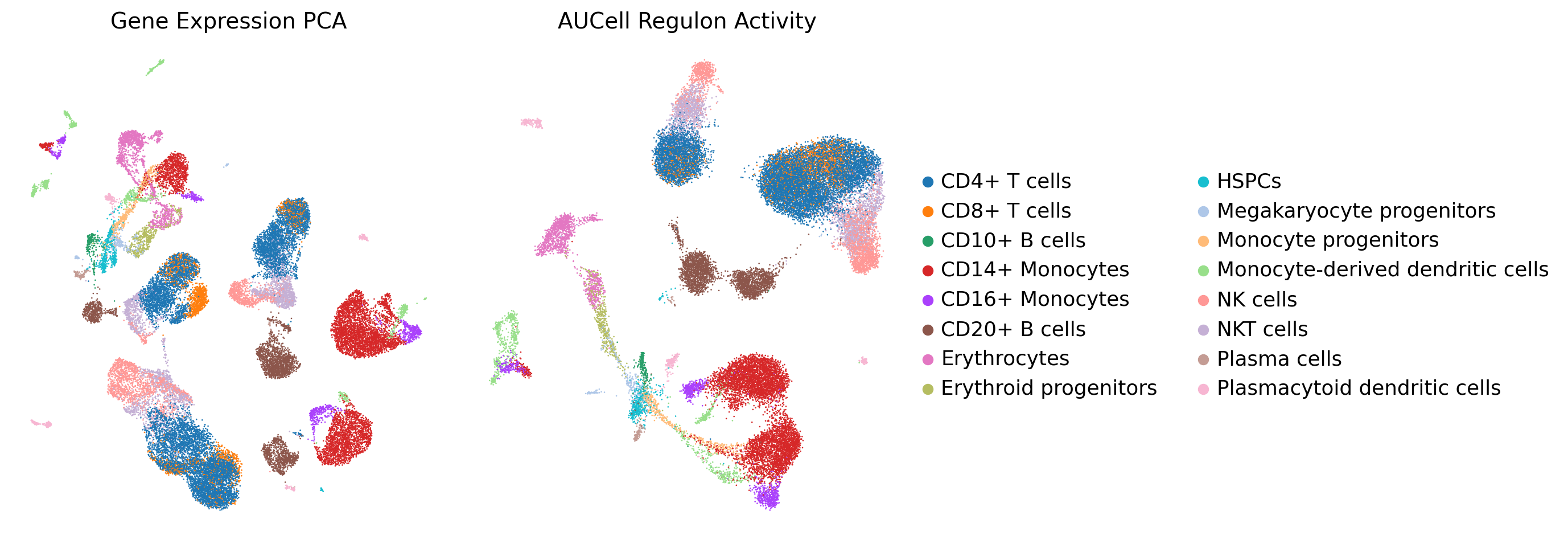

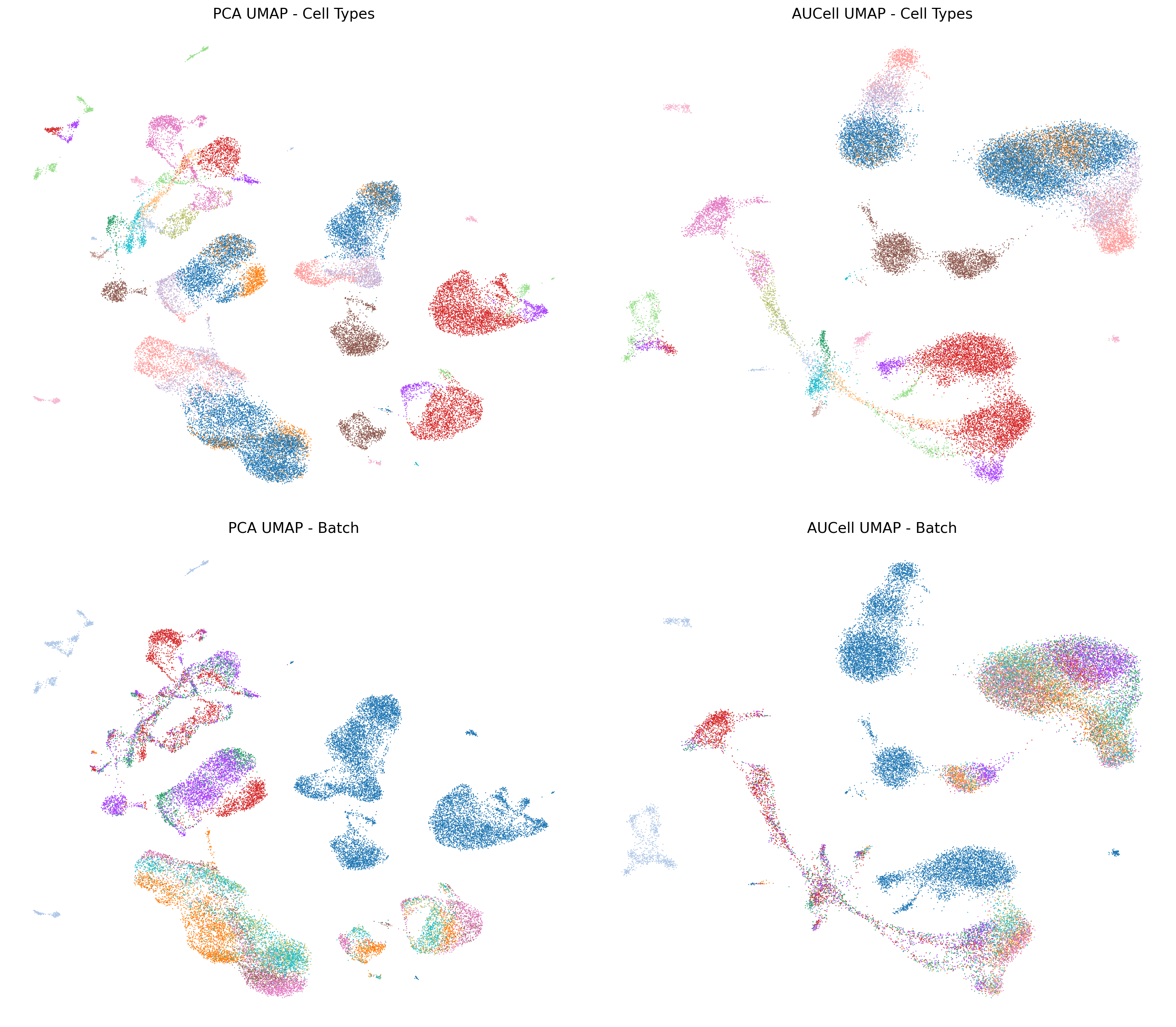

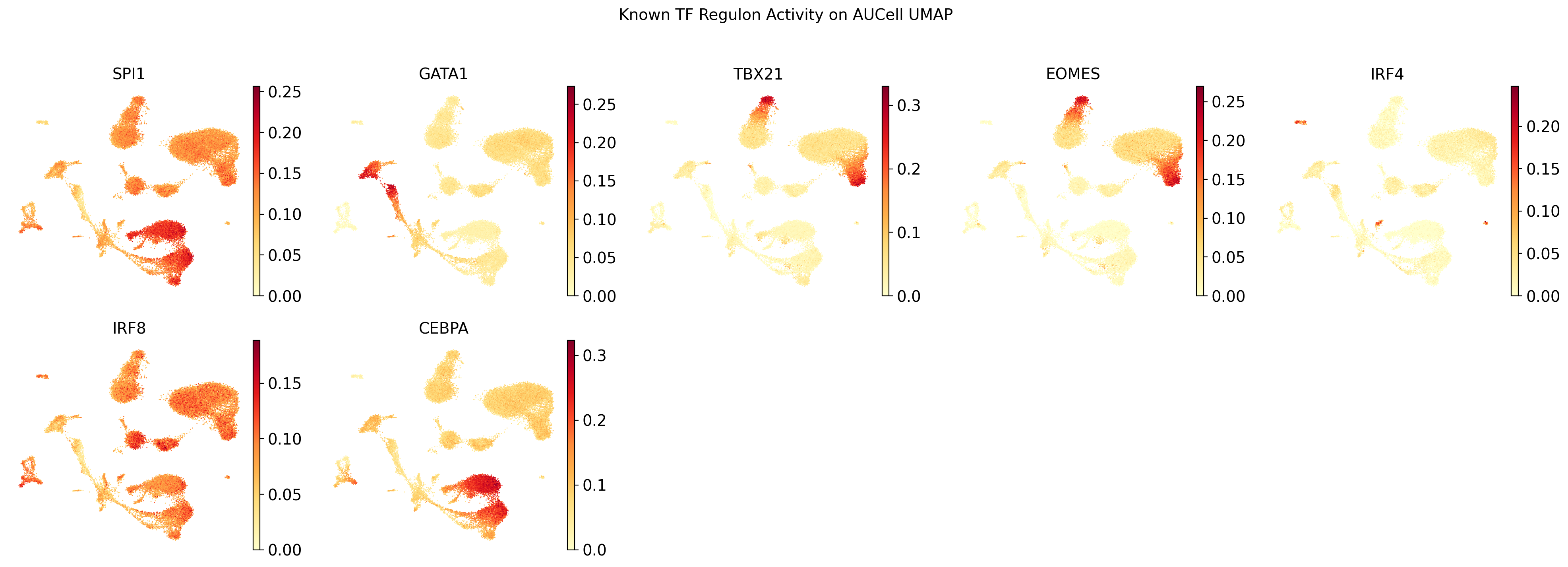

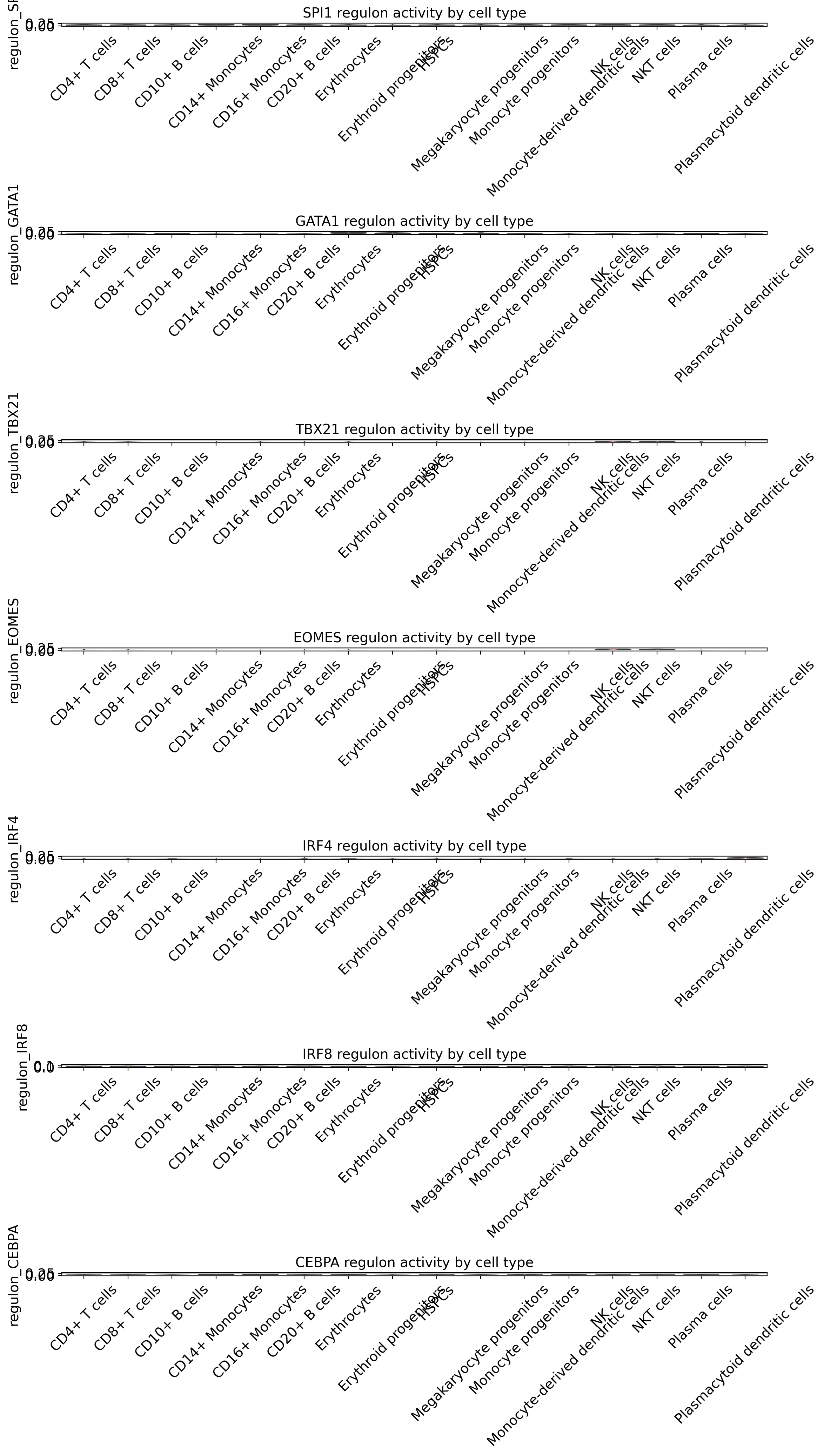

Visualizing regulon activity with UMAP – and why it naturally handles batch effects

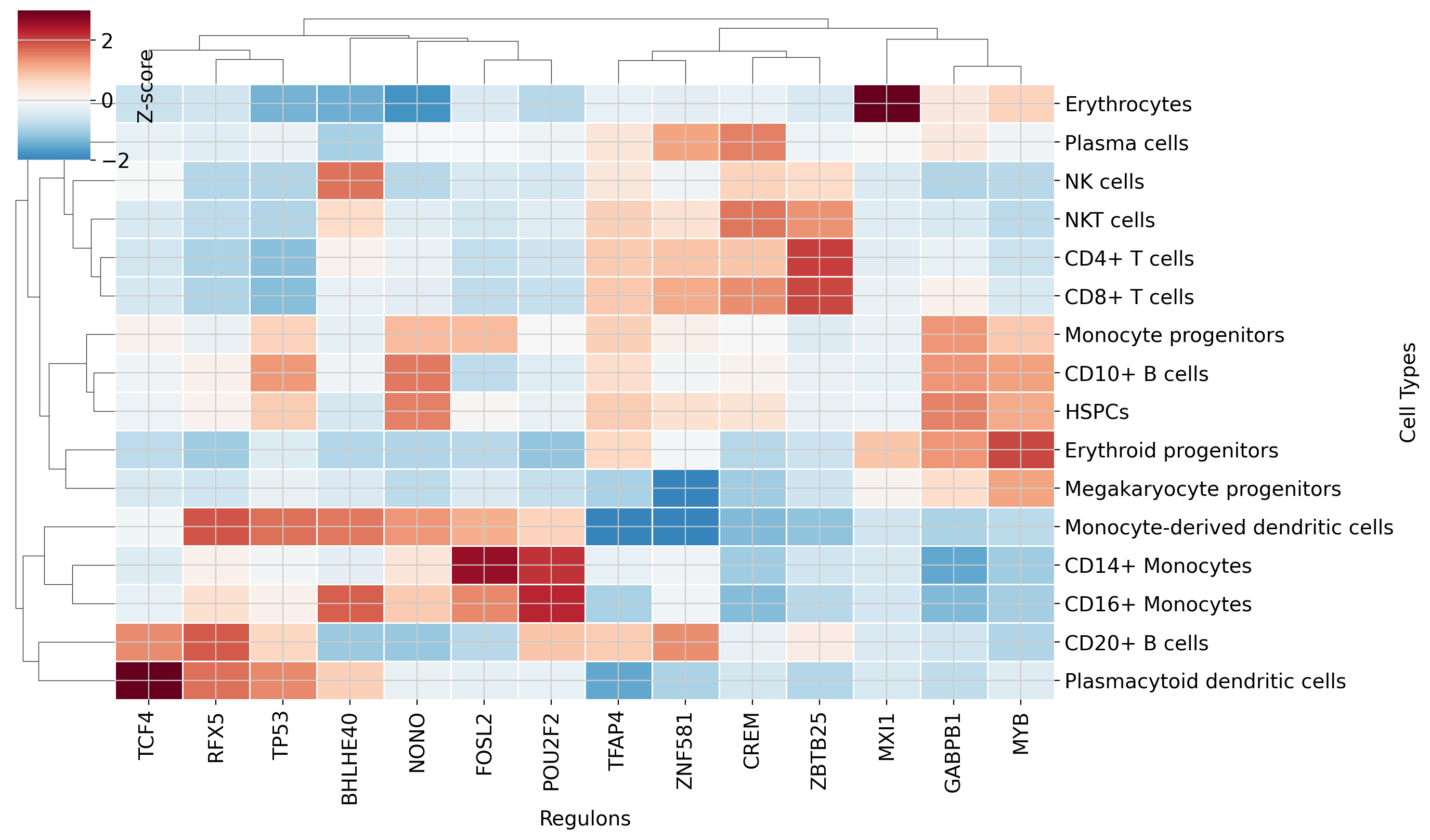

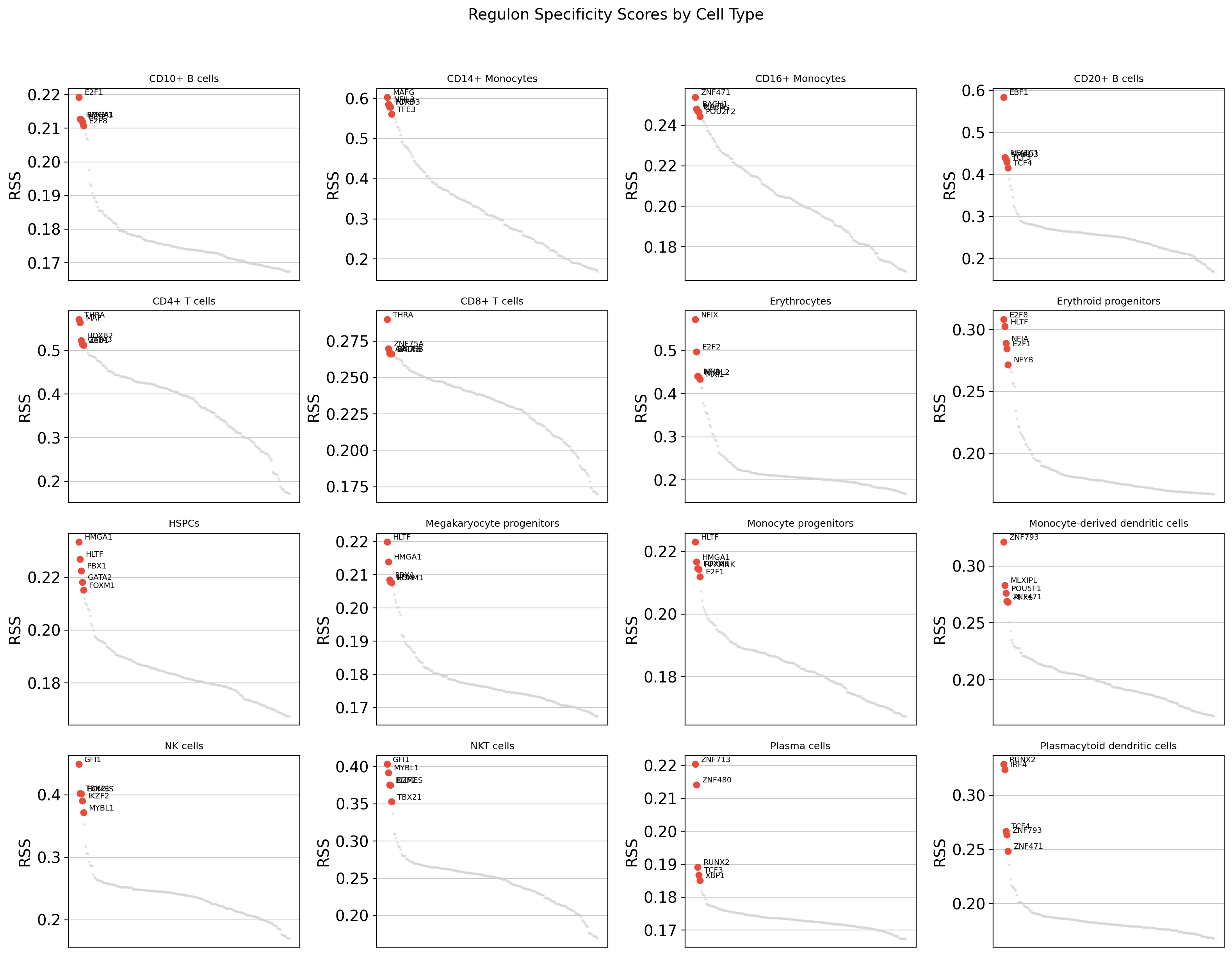

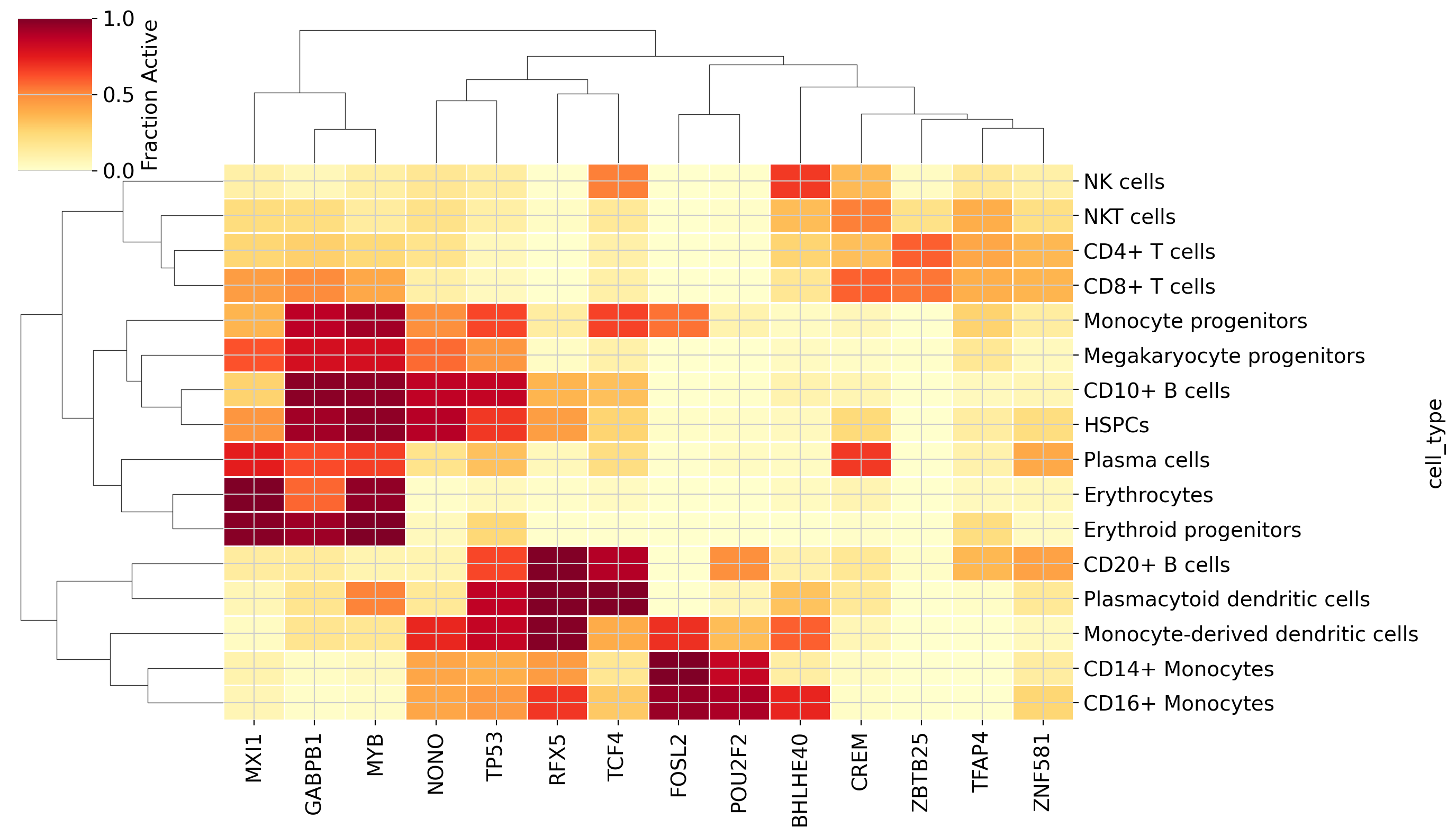

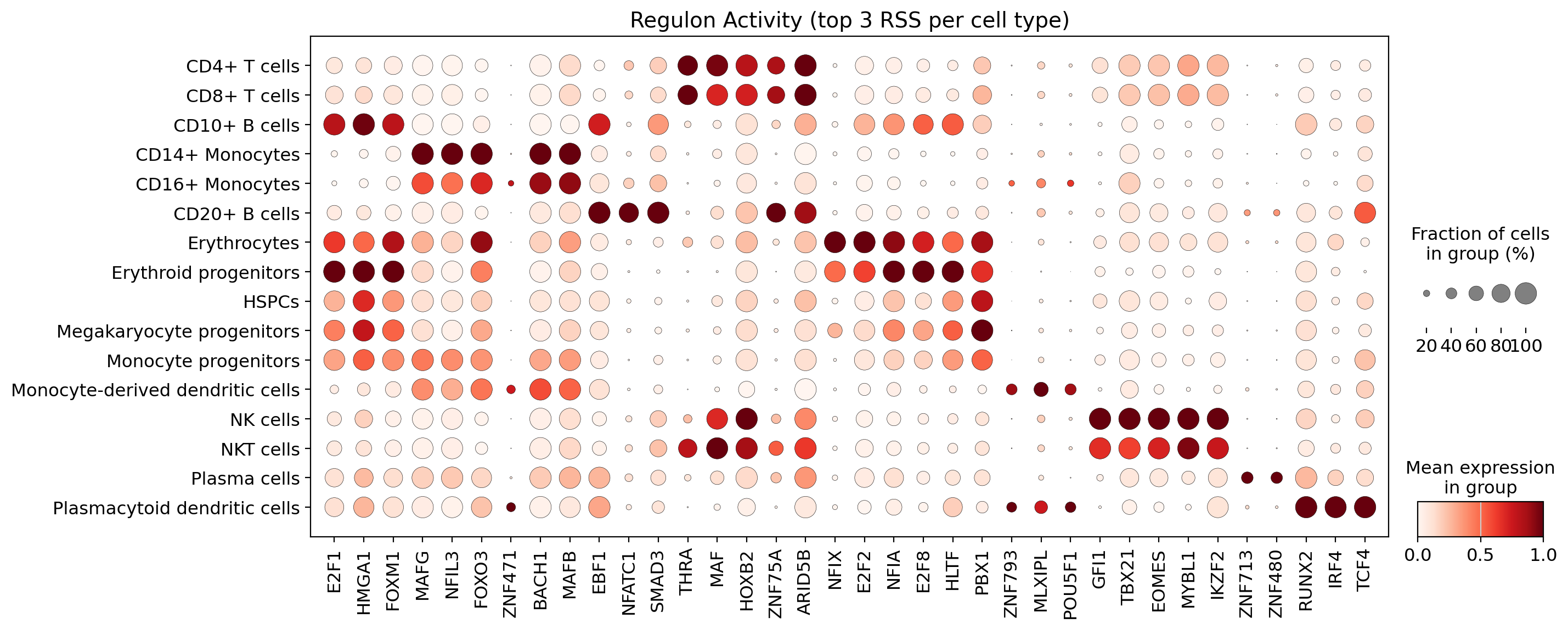

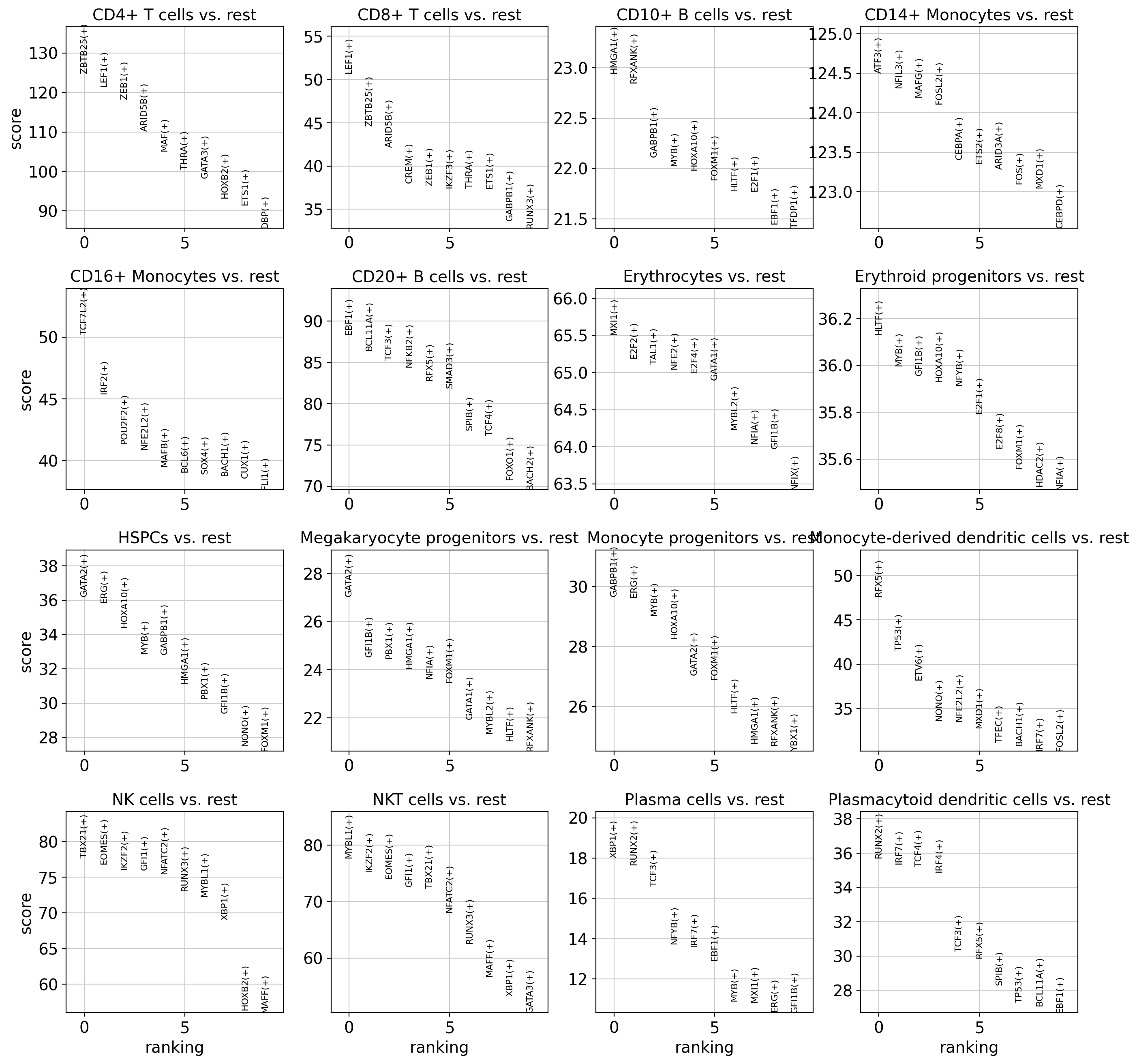

Identifying cell-type-specific regulons with Regulon Specificity Scores (RSS)

Validating results against known immune biology (PAX5 in B cells, SPI1 in monocytes, etc.)

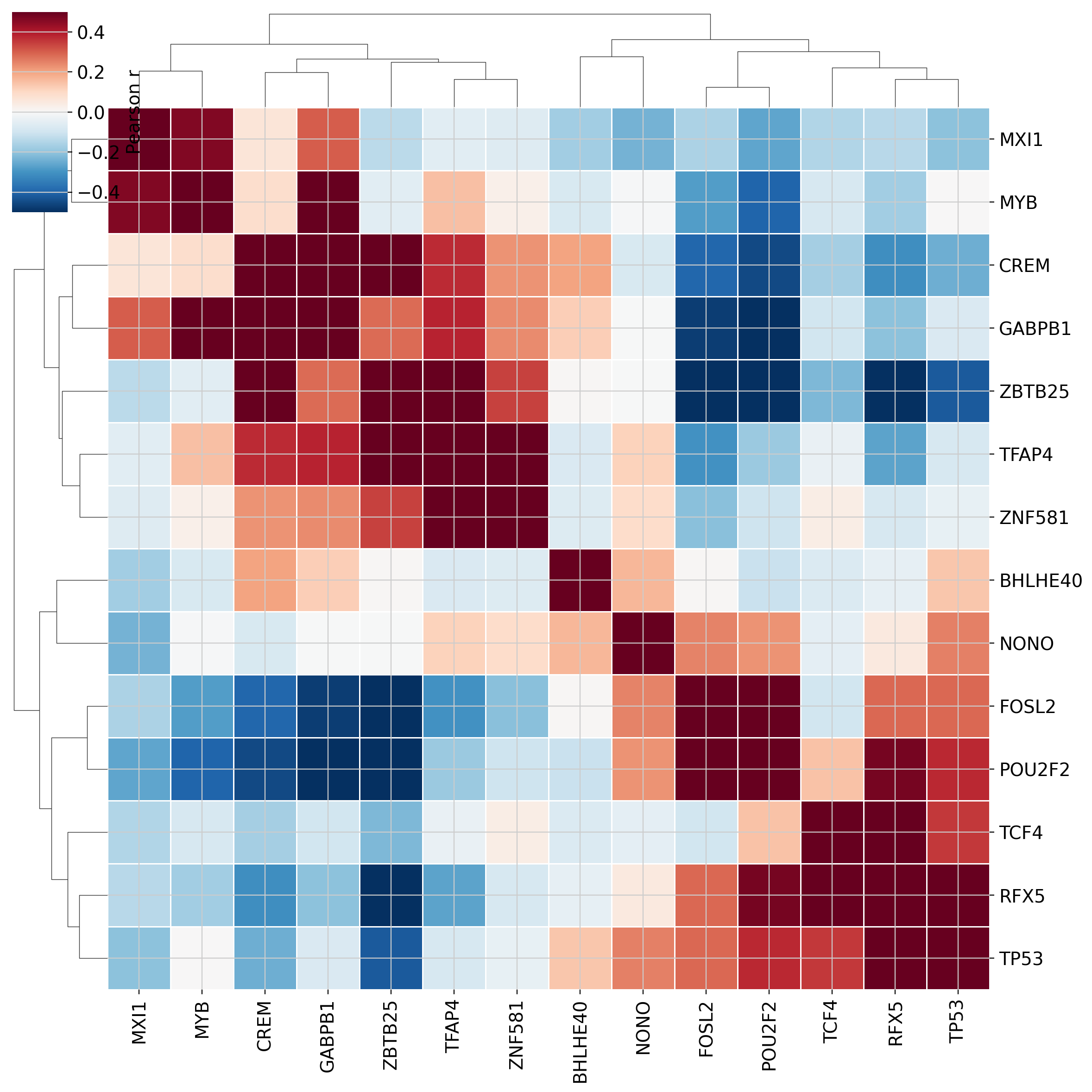

Differential regulon activity analysis

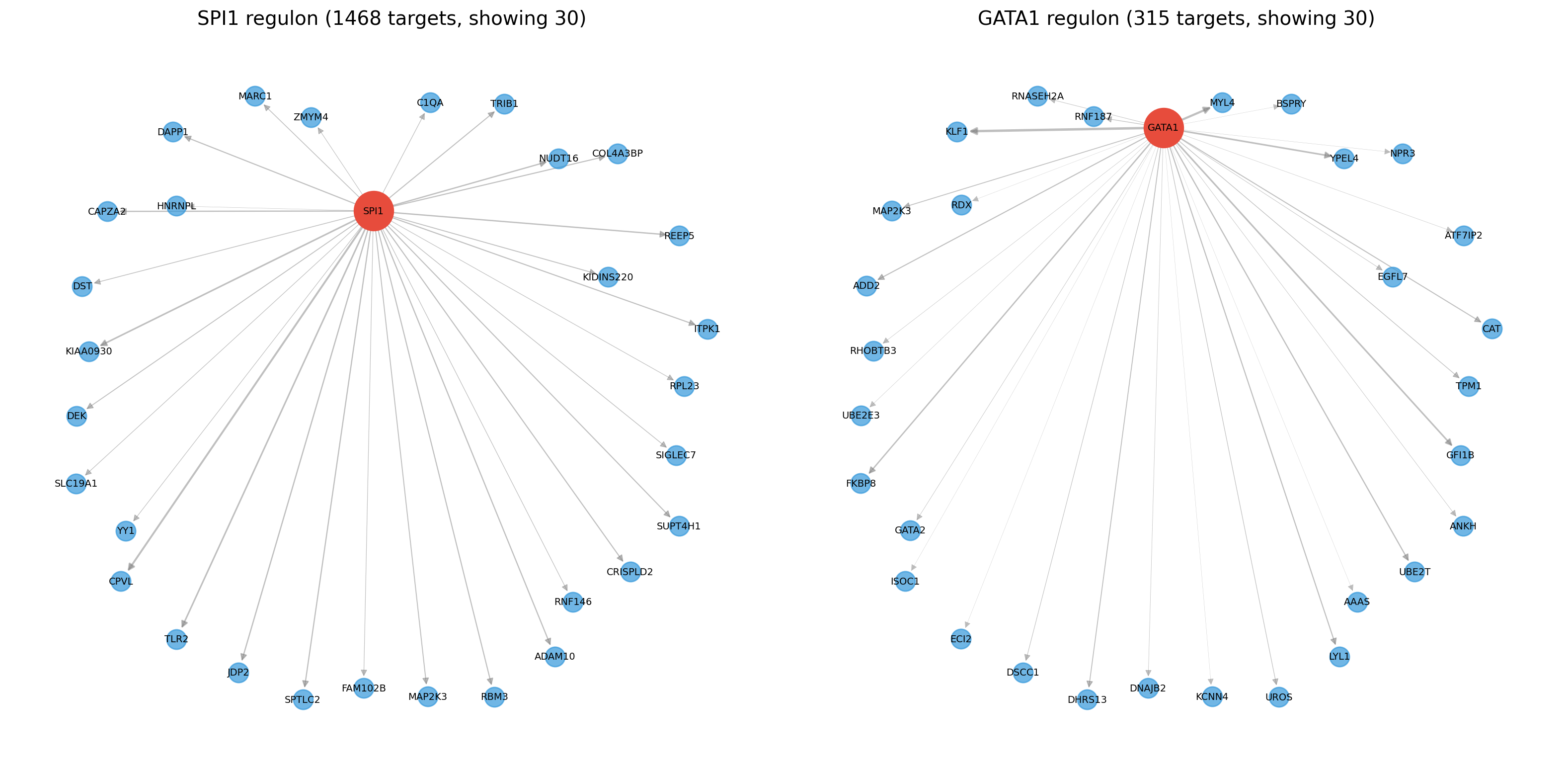

Exploring TF-target regulatory networks

Requirements: flashscenic[tutorial] (includes scanpy, matplotlib, seaborn, networkx)

GPU: A CUDA-capable GPU is recommended. The pipeline takes ~2 minutes on a modern GPU; downstream analyses are CPU-only.

1. Setup and Data Loading

import numpy as np

import pandas as pd

import scanpy as sc

import matplotlib.pyplot as plt

import seaborn as sns

import anndata

import warnings

warnings.filterwarnings('ignore')

import flashscenic as fs

sc.settings.set_figure_params(dpi=100, frameon=False, figsize=(4, 4))

plt.rcParams['figure.dpi'] = 100

Load the dataset

We use the Immune_ALL_human dataset from the scIB benchmark, containing PBMC and bone marrow cells from 5 studies (10 batches) and 16 annotated cell types spanning lymphoid, myeloid, erythroid, and progenitor populations.

Download from: https://figshare.com/ndownloader/files/25717328

DATA_PATH = '../experiments/data/Immune_ALL_human.h5ad'

adata = sc.read_h5ad(DATA_PATH)

print(f"Dataset: {adata.shape[0]:,} cells x {adata.shape[1]:,} genes")

print(f"Cell types: {adata.obs['final_annotation'].nunique()}")

print(f"Batches: {adata.obs['batch'].nunique()}")

print()

print(adata.obs['final_annotation'].value_counts())

Dataset: 33,506 cells x 12,303 genes

Cell types: 16

Batches: 10

final_annotation

CD4+ T cells 11011

CD14+ Monocytes 6483

CD20+ B cells 2873

NKT cells 2745

NK cells 2294

CD8+ T cells 2183

Erythrocytes 1502

Monocyte-derived dendritic cells 1012

CD16+ Monocytes 997

HSPCs 473

Erythroid progenitors 463

Plasmacytoid dendritic cells 436

Monocyte progenitors 428

Megakaryocyte progenitors 270

CD10+ B cells 207

Plasma cells 129

Name: count, dtype: int64

Preprocessing

flashscenic needs a dense expression matrix with no zero-variance genes. We first keep only genes expressed in more than 10 cells, then densify the matrix and drop any gene whose expression is constant across cells.

from scipy.sparse import issparse

# Filter genes: keep genes expressed in > 10 cells

if issparse(adata.X):
    cells_per_gene = np.array((adata.X > 0).sum(axis=0)).flatten()
else:
    cells_per_gene = (adata.X > 0).sum(axis=0)

gene_mask = cells_per_gene > 10

adata = adata[:, gene_mask].copy()

print(f"After gene filter (>10 cells): {adata.shape}")

# Densify sparse matrix

if issparse(adata.X):
    adata.X = adata.X.toarray()

# Remove zero-variance genes

gene_var = np.var(adata.X, axis=0)

nonzero_mask = gene_var > 0

n_removed = (~nonzero_mask).sum()

if n_removed > 0:
    print(f"Removing {n_removed} zero-variance genes")
    adata = adata[:, nonzero_mask].copy()

print(f"Final shape: {adata.shape}")

gene_names = adata.var_names.tolist()

exp_matrix = adata.X.astype(np.float32)

After gene filter (>10 cells): (33506, 12282)

Final shape: (33506, 12282)
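The two filters above can be checked on a toy matrix. The sketch below is illustrative only: `filter_genes` and its `min_cells` parameter are hypothetical names, not part of flashscenic, and a small dense NumPy array stands in for `adata.X`.

```python
import numpy as np

def filter_genes(X, gene_names, min_cells=10):
    """Hypothetical helper: drop genes detected in <= min_cells cells,
    then drop genes whose expression is constant across cells."""
    X = np.asarray(X, dtype=np.float32)
    names = np.asarray(gene_names)

    # Step 1: count the cells in which each gene is detected (value > 0).
    cells_per_gene = (X > 0).sum(axis=0)
    keep = cells_per_gene > min_cells
    X, names = X[:, keep], names[keep]

    # Step 2: a gene can pass step 1 and still be constant (same value in
    # every cell), so the variance check is a separate filter.
    keep = X.var(axis=0) > 0
    return X[:, keep], names[keep].tolist()

# Toy data: 4 cells x 4 genes, with min_cells lowered to 1 for the demo.
X = np.array([
    [1, 0, 2, 0],
    [3, 0, 2, 0],
    [0, 5, 2, 0],
    [2, 0, 2, 0],
])
X_filt, kept = filter_genes(X, ["A", "B", "C", "D"], min_cells=1)
print(kept, X_filt.shape)
```

In this toy case gene B is detected in only one cell and gene D in none, so both fail the first filter. Gene C is detected everywhere but never varies, so only the second filter catches it.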

Compute PCA baseline

We compute a PCA-based UMAP first so we can compare it against the AUCell-based UMAP later.

sc.tl.pca(adata, n_comps=30)

sc.pp.neighbors(adata, n_neighbors=15, use_rep='X_pca')

sc.tl.umap(adata)

adata.obsm['X_umap_pca'] = adata.obsm['X_umap'].copy()

2. Running flashscenic

The entire SCENIC pipeline – GRN inference (RegDiffusion), module filtering, cisTarget pruning, and AUCell scoring – runs in a single function call.
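To make the last stage concrete: AUCell scores a regulon in a cell by how strongly the regulon's genes are concentrated at the top of that cell's expression ranking. The sketch below is a simplified NumPy illustration of that idea, not flashscenic's implementation. The function name `aucell_sketch`, the `top_frac` parameter, the toy data, and the choice to normalise by the best achievable recovery curve are all assumptions made for this example.

```python
import numpy as np

def aucell_sketch(exp, membership, top_frac=0.05):
    """Simplified AUCell-style score (illustrative, not the library's code).

    exp:        (n_cells, n_genes) expression matrix
    membership: (n_regulons, n_genes) binary matrix, 1 = gene in regulon
    returns:    (n_cells, n_regulons) scores in [0, 1]
    """
    exp = np.asarray(exp, dtype=float)
    membership = np.asarray(membership, dtype=bool)
    n_cells, n_genes = exp.shape
    # Only the top-ranked genes of each cell contribute to the score.
    cutoff = max(1, int(round(top_frac * n_genes)))

    # Gene indices per cell, ordered from highest to lowest expression.
    order = np.argsort(-exp, axis=1, kind="stable")[:, :cutoff]

    scores = np.zeros((n_cells, membership.shape[0]))
    for r, genes in enumerate(membership):
        # hits[c, k] is True if the k-th ranked gene of cell c is in the regulon.
        hits = genes[order]
        # Recovery curve: number of regulon genes found within the top k ranks.
        recovery = np.cumsum(hits, axis=1)
        # Best case: all regulon genes occupy the first ranks.
        n_in = min(int(genes.sum()), cutoff)
        best = np.cumsum(np.arange(cutoff) < n_in)
        scores[:, r] = recovery.sum(axis=1) / max(best.sum(), 1)
    return scores

# Toy data: 3 cells x 4 genes, one regulon containing genes 0 and 1.
exp = np.array([
    [9, 8, 1, 0],   # both regulon genes at the top
    [0, 1, 8, 9],   # regulon genes at the bottom
    [9, 1, 8, 0],   # one regulon gene at the top
])
membership = np.array([[1, 1, 0, 0]])
scores = aucell_sketch(exp, membership, top_frac=0.5)
print(scores.round(3))
```

Because the score depends only on within-cell gene ranks, it is unaffected by any monotonic rescaling of a cell's expression values. None of this has to be written by hand in the tutorial: the call below runs all stages, including AUCell.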

result = fs.run_flashscenic(
    exp_matrix, gene_names,
    species='human',
    device='cuda',
    seed=42,
    verbose=True,
)

# Unpack results

auc_scores = result['auc_scores'] # (n_cells, n_regulons)

regulon_names = result['regulon_names'] # list of str

regulons = result['regulons'] # list of dicts

regulon_adj = result['regulon_adj'] # (n_regulons, n_genes) binary

print(f"\nFound {len(regulon_names)} regulons")

print(f"AUCell scores shape: {auc_scores.shape}")

print(f"Example regulons: {regulon_names[:10]}")

[flashscenic] Step 0/5: Preparing resources...

Downloading scenic/human/v10 resources to flashscenic_data/

[Human TF list (hg38)] Already cached: allTFs_hg38.txt

[Human 500bp/100bp ranking database (v10)] Already cached: hg38_500bp_up_100bp_down_full_tx_v10_clust.genes_vs_motifs.rankings.feather

[Human 10kbp ranking database (v10)] Already cached: hg38_10kbp_up_10kbp_down_full_tx_v10_clust.genes_vs_motifs.rankings.feather

[Human motif annotations (v10)] Already cached: motifs-v10nr_clust-nr.hgnc-m0.001-o0.0.tbl

Download complete.

[flashscenic] Step 1/5: Running RegDiffusion GRN inference (33506 cells, 12282 genes, 1000 steps)...

0%| | 0/1000 [00:00<?, ?it/s]

Training loss: 0.234, Change on Adj: -0.009: 21%|██▏ | 213/1000 [00:22<01:09, 11.35it/s]

Training loss: 0.199, Change on Adj: -0.009: 21%|██▏ | 213/1000 [00:22<01:09, 11.35it/s]

Training loss: 0.199, Change on Adj: -0.009: 22%|██▏ | 215/1000 [00:22<01:08, 11.51it/s]

Training loss: 0.217, Change on Adj: -0.009: 22%|██▏ | 215/1000 [00:22<01:08, 11.51it/s]

Training loss: 0.213, Change on Adj: -0.009: 22%|██▏ | 215/1000 [00:22<01:08, 11.51it/s]

Training loss: 0.213, Change on Adj: -0.009: 22%|██▏ | 217/1000 [00:22<01:07, 11.62it/s]

Training loss: 0.263, Change on Adj: -0.009: 22%|██▏ | 217/1000 [00:22<01:07, 11.62it/s]

Training loss: 0.223, Change on Adj: -0.008: 22%|██▏ | 217/1000 [00:22<01:07, 11.62it/s]

Training loss: 0.223, Change on Adj: -0.008: 22%|██▏ | 219/1000 [00:22<01:06, 11.69it/s]

Training loss: 0.199, Change on Adj: -0.008: 22%|██▏ | 219/1000 [00:22<01:06, 11.69it/s]

Training loss: 0.231, Change on Adj: -0.008: 22%|██▏ | 219/1000 [00:22<01:06, 11.69it/s]

Training loss: 0.231, Change on Adj: -0.008: 22%|██▏ | 221/1000 [00:22<01:06, 11.65it/s]

Training loss: 0.232, Change on Adj: -0.008: 22%|██▏ | 221/1000 [00:22<01:06, 11.65it/s]

Training loss: 0.284, Change on Adj: -0.009: 22%|██▏ | 221/1000 [00:22<01:06, 11.65it/s]

Training loss: 0.284, Change on Adj: -0.009: 22%|██▏ | 223/1000 [00:22<01:06, 11.71it/s]

Training loss: 0.304, Change on Adj: -0.009: 22%|██▏ | 223/1000 [00:22<01:06, 11.71it/s]

Training loss: 0.217, Change on Adj: -0.009: 22%|██▏ | 223/1000 [00:22<01:06, 11.71it/s]

Training loss: 0.217, Change on Adj: -0.009: 22%|██▎ | 225/1000 [00:22<01:05, 11.75it/s]

Training loss: 0.230, Change on Adj: -0.008: 22%|██▎ | 225/1000 [00:23<01:05, 11.75it/s]

Training loss: 0.239, Change on Adj: -0.008: 22%|██▎ | 225/1000 [00:23<01:05, 11.75it/s]

Training loss: 0.239, Change on Adj: -0.008: 23%|██▎ | 227/1000 [00:23<01:05, 11.79it/s]

Training loss: 0.248, Change on Adj: -0.008: 23%|██▎ | 227/1000 [00:23<01:05, 11.79it/s]

Training loss: 0.243, Change on Adj: -0.008: 23%|██▎ | 227/1000 [00:23<01:05, 11.79it/s]

Training loss: 0.243, Change on Adj: -0.008: 23%|██▎ | 229/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.260, Change on Adj: -0.008: 23%|██▎ | 229/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.270, Change on Adj: -0.008: 23%|██▎ | 229/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.270, Change on Adj: -0.008: 23%|██▎ | 231/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.240, Change on Adj: -0.008: 23%|██▎ | 231/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.246, Change on Adj: -0.008: 23%|██▎ | 231/1000 [00:23<01:05, 11.82it/s]

Training loss: 0.246, Change on Adj: -0.008: 23%|██▎ | 233/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.294, Change on Adj: -0.008: 23%|██▎ | 233/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.244, Change on Adj: -0.008: 23%|██▎ | 233/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.244, Change on Adj: -0.008: 24%|██▎ | 235/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.257, Change on Adj: -0.008: 24%|██▎ | 235/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.255, Change on Adj: -0.008: 24%|██▎ | 235/1000 [00:23<01:04, 11.84it/s]

Training loss: 0.255, Change on Adj: -0.008: 24%|██▎ | 237/1000 [00:23<01:04, 11.74it/s]

Training loss: 0.260, Change on Adj: -0.008: 24%|██▎ | 237/1000 [00:24<01:04, 11.74it/s]

Training loss: 0.273, Change on Adj: -0.008: 24%|██▎ | 237/1000 [00:24<01:04, 11.74it/s]

Training loss: 0.273, Change on Adj: -0.008: 24%|██▍ | 239/1000 [00:24<01:04, 11.77it/s]

Training loss: 0.271, Change on Adj: -0.008: 24%|██▍ | 239/1000 [00:24<01:04, 11.77it/s]

Training loss: 0.249, Change on Adj: -0.008: 24%|██▍ | 239/1000 [00:24<01:04, 11.77it/s]

Training loss: 0.249, Change on Adj: -0.008: 24%|██▍ | 241/1000 [00:24<01:04, 11.80it/s]

Training loss: 0.251, Change on Adj: -0.008: 24%|██▍ | 241/1000 [00:24<01:04, 11.80it/s]

Training loss: 0.211, Change on Adj: -0.008: 24%|██▍ | 241/1000 [00:24<01:04, 11.80it/s]

Training loss: 0.211, Change on Adj: -0.008: 24%|██▍ | 243/1000 [00:24<01:04, 11.81it/s]

Training loss: 0.245, Change on Adj: -0.008: 24%|██▍ | 243/1000 [00:24<01:04, 11.81it/s]

Training loss: 0.266, Change on Adj: -0.008: 24%|██▍ | 243/1000 [00:24<01:04, 11.81it/s]

Training loss: 0.266, Change on Adj: -0.008: 24%|██▍ | 245/1000 [00:24<01:03, 11.82it/s]

Training loss: 0.192, Change on Adj: -0.008: 24%|██▍ | 245/1000 [00:24<01:03, 11.82it/s]

Training loss: 0.230, Change on Adj: -0.008: 24%|██▍ | 245/1000 [00:24<01:03, 11.82it/s]

Training loss: 0.230, Change on Adj: -0.008: 25%|██▍ | 247/1000 [00:24<01:03, 11.84it/s]

Training loss: 0.262, Change on Adj: -0.008: 25%|██▍ | 247/1000 [00:24<01:03, 11.84it/s]

Training loss: 0.222, Change on Adj: -0.008: 25%|██▍ | 247/1000 [00:24<01:03, 11.84it/s]

Training loss: 0.222, Change on Adj: -0.008: 25%|██▍ | 249/1000 [00:24<01:03, 11.84it/s]

Training loss: 0.248, Change on Adj: -0.008: 25%|██▍ | 249/1000 [00:25<01:03, 11.84it/s]

Training loss: 0.290, Change on Adj: -0.008: 25%|██▍ | 249/1000 [00:25<01:03, 11.84it/s]

Training loss: 0.290, Change on Adj: -0.008: 25%|██▌ | 251/1000 [00:25<01:03, 11.85it/s]

Training loss: 0.236, Change on Adj: -0.006: 30%|███ | 300/1000 [00:29<01:13, 9.58it/s]

Training loss: 0.280, Change on Adj: -0.004: 35%|███▌ | 350/1000 [00:34<01:07, 9.60it/s]

Training loss: 0.198, Change on Adj: -0.002: 40%|████ | 400/1000 [00:40<01:02, 9.56it/s]


Training loss: 0.199, Change on Adj: -0.002: 41%|████▏ | 413/1000 [00:41<01:01, 9.57it/s]

Training loss: 0.221, Change on Adj: -0.002: 41%|████▏ | 413/1000 [00:41<01:01, 9.57it/s]

Training loss: 0.221, Change on Adj: -0.002: 41%|████▏ | 414/1000 [00:41<01:01, 9.53it/s]

Training loss: 0.205, Change on Adj: -0.002: 41%|████▏ | 414/1000 [00:41<01:01, 9.53it/s]

Training loss: 0.205, Change on Adj: -0.002: 42%|████▏ | 415/1000 [00:41<01:01, 9.49it/s]

Training loss: 0.267, Change on Adj: -0.002: 42%|████▏ | 415/1000 [00:41<01:01, 9.49it/s]

Training loss: 0.267, Change on Adj: -0.002: 42%|████▏ | 416/1000 [00:41<01:01, 9.53it/s]

Training loss: 0.246, Change on Adj: -0.002: 42%|████▏ | 416/1000 [00:41<01:01, 9.53it/s]

Training loss: 0.246, Change on Adj: -0.002: 42%|████▏ | 417/1000 [00:41<01:00, 9.58it/s]

Training loss: 0.260, Change on Adj: -0.002: 42%|████▏ | 417/1000 [00:42<01:00, 9.58it/s]

Training loss: 0.260, Change on Adj: -0.002: 42%|████▏ | 418/1000 [00:42<01:01, 9.54it/s]

Training loss: 0.215, Change on Adj: -0.002: 42%|████▏ | 418/1000 [00:42<01:01, 9.54it/s]

Training loss: 0.215, Change on Adj: -0.002: 42%|████▏ | 419/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.215, Change on Adj: -0.002: 42%|████▏ | 419/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.215, Change on Adj: -0.002: 42%|████▏ | 420/1000 [00:42<01:00, 9.54it/s]

Training loss: 0.203, Change on Adj: -0.002: 42%|████▏ | 420/1000 [00:42<01:00, 9.54it/s]

Training loss: 0.203, Change on Adj: -0.002: 42%|████▏ | 421/1000 [00:42<01:00, 9.50it/s]

Training loss: 0.230, Change on Adj: -0.002: 42%|████▏ | 421/1000 [00:42<01:00, 9.50it/s]

Training loss: 0.230, Change on Adj: -0.002: 42%|████▏ | 422/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.246, Change on Adj: -0.002: 42%|████▏ | 422/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.246, Change on Adj: -0.002: 42%|████▏ | 423/1000 [00:42<01:00, 9.57it/s]

Training loss: 0.174, Change on Adj: -0.002: 42%|████▏ | 423/1000 [00:42<01:00, 9.57it/s]

Training loss: 0.174, Change on Adj: -0.002: 42%|████▏ | 424/1000 [00:42<01:00, 9.58it/s]

Training loss: 0.236, Change on Adj: -0.002: 42%|████▏ | 424/1000 [00:42<01:00, 9.58it/s]

Training loss: 0.236, Change on Adj: -0.002: 42%|████▎ | 425/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.277, Change on Adj: -0.002: 42%|████▎ | 425/1000 [00:42<01:00, 9.53it/s]

Training loss: 0.277, Change on Adj: -0.002: 43%|████▎ | 426/1000 [00:42<01:00, 9.51it/s]

Training loss: 0.286, Change on Adj: -0.002: 43%|████▎ | 426/1000 [00:43<01:00, 9.51it/s]

Training loss: 0.286, Change on Adj: -0.002: 43%|████▎ | 427/1000 [00:43<01:00, 9.51it/s]

Training loss: 0.222, Change on Adj: -0.002: 43%|████▎ | 427/1000 [00:43<01:00, 9.51it/s]

Training loss: 0.222, Change on Adj: -0.002: 43%|████▎ | 428/1000 [00:43<01:00, 9.49it/s]

Training loss: 0.266, Change on Adj: -0.002: 43%|████▎ | 428/1000 [00:43<01:00, 9.49it/s]

Training loss: 0.266, Change on Adj: -0.002: 43%|████▎ | 429/1000 [00:43<00:59, 9.52it/s]

Training loss: 0.237, Change on Adj: -0.002: 43%|████▎ | 429/1000 [00:43<00:59, 9.52it/s]

Training loss: 0.237, Change on Adj: -0.002: 43%|████▎ | 430/1000 [00:43<01:00, 9.43it/s]

Training loss: 0.313, Change on Adj: -0.002: 43%|████▎ | 430/1000 [00:43<01:00, 9.43it/s]

Training loss: 0.313, Change on Adj: -0.002: 43%|████▎ | 431/1000 [00:43<01:00, 9.45it/s]

Training loss: 0.278, Change on Adj: -0.002: 43%|████▎ | 431/1000 [00:43<01:00, 9.45it/s]

Training loss: 0.278, Change on Adj: -0.002: 43%|████▎ | 432/1000 [00:43<01:00, 9.46it/s]

Training loss: 0.232, Change on Adj: -0.002: 43%|████▎ | 432/1000 [00:43<01:00, 9.46it/s]

Training loss: 0.232, Change on Adj: -0.002: 43%|████▎ | 433/1000 [00:43<00:59, 9.53it/s]

Training loss: 0.257, Change on Adj: -0.002: 43%|████▎ | 433/1000 [00:43<00:59, 9.53it/s]

Training loss: 0.257, Change on Adj: -0.002: 43%|████▎ | 434/1000 [00:43<00:59, 9.50it/s]

Training loss: 0.218, Change on Adj: -0.002: 43%|████▎ | 434/1000 [00:43<00:59, 9.50it/s]

Training loss: 0.218, Change on Adj: -0.002: 44%|████▎ | 435/1000 [00:43<00:59, 9.54it/s]

Training loss: 0.257, Change on Adj: -0.002: 44%|████▎ | 435/1000 [00:43<00:59, 9.54it/s]

Training loss: 0.257, Change on Adj: -0.002: 44%|████▎ | 436/1000 [00:43<00:59, 9.55it/s]

Training loss: 0.219, Change on Adj: -0.002: 44%|████▎ | 436/1000 [00:44<00:59, 9.55it/s]

Training loss: 0.219, Change on Adj: -0.002: 44%|████▎ | 437/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.215, Change on Adj: -0.002: 44%|████▎ | 437/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.215, Change on Adj: -0.002: 44%|████▍ | 438/1000 [00:44<00:58, 9.53it/s]

Training loss: 0.174, Change on Adj: -0.002: 44%|████▍ | 438/1000 [00:44<00:58, 9.53it/s]

Training loss: 0.174, Change on Adj: -0.002: 44%|████▍ | 439/1000 [00:44<00:58, 9.54it/s]

Training loss: 0.190, Change on Adj: -0.002: 44%|████▍ | 439/1000 [00:44<00:58, 9.54it/s]

Training loss: 0.190, Change on Adj: -0.002: 44%|████▍ | 440/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.236, Change on Adj: -0.002: 44%|████▍ | 440/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.236, Change on Adj: -0.002: 44%|████▍ | 441/1000 [00:44<00:58, 9.57it/s]

Training loss: 0.245, Change on Adj: -0.002: 44%|████▍ | 441/1000 [00:44<00:58, 9.57it/s]

Training loss: 0.245, Change on Adj: -0.002: 44%|████▍ | 442/1000 [00:44<00:58, 9.58it/s]

Training loss: 0.248, Change on Adj: -0.002: 44%|████▍ | 442/1000 [00:44<00:58, 9.58it/s]

Training loss: 0.248, Change on Adj: -0.002: 44%|████▍ | 443/1000 [00:44<00:58, 9.54it/s]

Training loss: 0.218, Change on Adj: -0.002: 44%|████▍ | 443/1000 [00:44<00:58, 9.54it/s]

Training loss: 0.218, Change on Adj: -0.002: 44%|████▍ | 444/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.250, Change on Adj: -0.002: 44%|████▍ | 444/1000 [00:44<00:58, 9.55it/s]

Training loss: 0.250, Change on Adj: -0.002: 44%|████▍ | 445/1000 [00:44<00:58, 9.52it/s]

Training loss: 0.212, Change on Adj: -0.002: 44%|████▍ | 445/1000 [00:45<00:58, 9.52it/s]

Training loss: 0.212, Change on Adj: -0.002: 45%|████▍ | 446/1000 [00:45<00:58, 9.52it/s]

Training loss: 0.237, Change on Adj: -0.002: 45%|████▍ | 446/1000 [00:45<00:58, 9.52it/s]

Training loss: 0.237, Change on Adj: -0.002: 45%|████▍ | 447/1000 [00:45<00:58, 9.51it/s]

Training loss: 0.250, Change on Adj: -0.002: 45%|████▍ | 447/1000 [00:45<00:58, 9.51it/s]

Training loss: 0.250, Change on Adj: -0.002: 45%|████▍ | 448/1000 [00:45<00:57, 9.53it/s]

Training loss: 0.202, Change on Adj: -0.002: 45%|████▍ | 448/1000 [00:45<00:57, 9.53it/s]

Training loss: 0.202, Change on Adj: -0.002: 45%|████▍ | 449/1000 [00:45<00:58, 9.50it/s]

Training loss: 0.245, Change on Adj: -0.002: 45%|████▍ | 449/1000 [00:45<00:58, 9.50it/s]

Training loss: 0.245, Change on Adj: -0.002: 45%|████▌ | 450/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.205, Change on Adj: -0.002: 45%|████▌ | 450/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.205, Change on Adj: -0.002: 45%|████▌ | 451/1000 [00:45<00:57, 9.50it/s]

Training loss: 0.245, Change on Adj: -0.002: 45%|████▌ | 451/1000 [00:45<00:57, 9.50it/s]

Training loss: 0.245, Change on Adj: -0.002: 45%|████▌ | 452/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.218, Change on Adj: -0.002: 45%|████▌ | 452/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.218, Change on Adj: -0.002: 45%|████▌ | 453/1000 [00:45<00:57, 9.53it/s]

Training loss: 0.188, Change on Adj: -0.002: 45%|████▌ | 453/1000 [00:45<00:57, 9.53it/s]

Training loss: 0.188, Change on Adj: -0.002: 45%|████▌ | 454/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.234, Change on Adj: -0.002: 45%|████▌ | 454/1000 [00:45<00:57, 9.51it/s]

Training loss: 0.234, Change on Adj: -0.002: 46%|████▌ | 455/1000 [00:45<00:57, 9.52it/s]

Training loss: 0.229, Change on Adj: -0.002: 46%|████▌ | 455/1000 [00:46<00:57, 9.52it/s]

Training loss: 0.229, Change on Adj: -0.002: 46%|████▌ | 456/1000 [00:46<00:57, 9.53it/s]

Training loss: 0.181, Change on Adj: -0.002: 46%|████▌ | 456/1000 [00:46<00:57, 9.53it/s]

Training loss: 0.181, Change on Adj: -0.002: 46%|████▌ | 457/1000 [00:46<00:56, 9.53it/s]

Training loss: 0.251, Change on Adj: -0.002: 46%|████▌ | 457/1000 [00:46<00:56, 9.53it/s]

Training loss: 0.251, Change on Adj: -0.002: 46%|████▌ | 458/1000 [00:46<00:57, 9.50it/s]

Training loss: 0.205, Change on Adj: -0.002: 46%|████▌ | 458/1000 [00:46<00:57, 9.50it/s]

Training loss: 0.205, Change on Adj: -0.002: 46%|████▌ | 459/1000 [00:46<00:56, 9.50it/s]

Training loss: 0.234, Change on Adj: -0.002: 46%|████▌ | 459/1000 [00:46<00:56, 9.50it/s]

Training loss: 0.234, Change on Adj: -0.002: 46%|████▌ | 460/1000 [00:46<00:56, 9.49it/s]

Training loss: 0.255, Change on Adj: -0.002: 46%|████▌ | 460/1000 [00:46<00:56, 9.49it/s]

Training loss: 0.255, Change on Adj: -0.002: 46%|████▌ | 461/1000 [00:46<00:56, 9.49it/s]

Training loss: 0.203, Change on Adj: -0.002: 46%|████▌ | 461/1000 [00:46<00:56, 9.49it/s]

Training loss: 0.203, Change on Adj: -0.002: 46%|████▌ | 462/1000 [00:46<00:56, 9.47it/s]

Training loss: 0.267, Change on Adj: -0.002: 46%|████▌ | 462/1000 [00:46<00:56, 9.47it/s]

Training loss: 0.267, Change on Adj: -0.002: 46%|████▋ | 463/1000 [00:46<00:56, 9.51it/s]

Training loss: 0.209, Change on Adj: -0.002: 46%|████▋ | 463/1000 [00:46<00:56, 9.51it/s]

Training loss: 0.209, Change on Adj: -0.002: 46%|████▋ | 464/1000 [00:46<00:56, 9.50it/s]

Training loss: 0.250, Change on Adj: -0.002: 46%|████▋ | 464/1000 [00:47<00:56, 9.50it/s]

Training loss: 0.250, Change on Adj: -0.002: 46%|████▋ | 465/1000 [00:47<00:56, 9.51it/s]

Training loss: 0.263, Change on Adj: -0.002: 46%|████▋ | 465/1000 [00:47<00:56, 9.51it/s]